In this blog post, we will discuss Nvidia’s recent announcements at CES and their strategic partnership with Groq, focusing on their strategy to enhance LLM agent inference. We will explore three main aspects: the importance of KV cache hits, the role of SRAM in improving decoding speed, and a proposed hardware-software architecture that potentially speeds up agent inference to real-time.

Prerequisite: LLM inference basics. Suggested reading: LLM Inference; KV Cache Offloading with LMCache; LLM Agent with KV Cache

CES Announcements and Groq Acquisition

In CES 2026, NVIDIA introduced the Rubin architecture. As described by this announcement This new GPU architecture is designed to significantly boost the performance of large language model (LLM) inference by 10x. It incorporates NVIDIA Vera CPU, Rubin GPU, NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 DPU and Spectrum-6 Ethernet Switch. It natively supports the Inference Context Memory Storage Platform ICMS, which stores KV cache for contexts that allow future reuse.

Moreover, NVIDIA announced a strategic partnership with Groq, including a non-exclusive technology licensing agreement. Groq, a company known for its high-performance AI accelerators utilizing SRAM extensively, has an LPU architecture highly optimized for low-latency inference. This collaboration allows NVIDIA to integrate similar low-latency technologies into their ecosystem.

Immediate Comments on the News

From the authors’ point of view, the new architecture is basically adding a KV cache layer to the existing GPU platform. The ideas are similar to the previous post about LMCache, but Nvidia have also added some additional hardware components such as the DPUs (Data Processing Unit).

On the Groq side, this acqui-hire may be a defensive move for Nvidia to reduce competitors. But regardless, ultra-low latency inference is crucial for real-time agents. Groq’s architecture is well-suited for this purpose and potentially can be integrated into future NVIDIA platforms.

These movements demonstrate Nvidia’s investments into inference, which it has been talking about since 2025. This aligns with the problem faced by Nvidia’s biggest end customer: Frontier Labs including OpenAI, Anthropic, … One of the main doubts for these frontier labs before they raise more capital is their profitability. While people are racing for the best capability on benchmarks and providing training services like Tinker, so far inference is the only cash cow for them. Moreover, the newest release of Opus-4.6 and GPT-5.3-Codex both have fast-inference deployment options on Cerebras. This suggests that the industry is moving towards real-time agents.

There is usually a tradeoff between speed and cost. For example, Opus-4.6 fast is ~5x more expensive than the regular version on Cursor. As the biggest chip provider, Nvidia sits at the best position to attain the best speed-cost tradeoff for real-time agents by providing the most optimized hardware and software stack for inference. We will expand into the discussion on how we can potentially build out the next generation agent inference platform by hardware-software co-design for both Prefill and Decode stages.

Importance of KV Cache Hits

In our previous blog post, we disussed the significance of KV cache hits in enhancing the efficiency of LLM agents. By storing previously computed KV Cache, agents can avoid redundant computations, leading to faster response times and reduced computational load.

The danger of a cache non-hit for any agent LLM trace is that it has to do the prefill for all the previous tokens again. For example, if an agent misses the previous KV cache for a 100K token context, it has to redo the prefill. On xx GPU with balba, prefill takes xx seconds. Since prefill is not batched, this will also block other tasks on the same GPU from being scheduled. Given that each agent can easily go over 20 turns, a few times of KV cache re-prefill can easily inflate the total latency by multiple times.

However, if we are able to save and reuse the KV cache for long contexts, we can significantly reduce the full prefill time. For each round of the agents, we only need to do incremental prefill for the newly added user and agent messages. This can lead to substantial speedups by reduced computation.

Decoding Speed and the Memory Bottleneck

However, we did not go into full depth of decoding in agent inference.

Agents on many categories often have . We give a brief breakdown of the time spent in prefill and decoding for a SWE-agent task assuming this is the only task on the set of GPUs. Although decoding is often executed in batched manner to increase total throughput of the system, the latency experienced by each individual user will still be very long if there are many decoding tokens.

Below is a breakdown of time spent in prefill and decoding for a SWE-agent task assuming single request and perfect KV cache hit. We run GPT-5.2 on mini-SWE-agent tasks and record the time spent in prefill and decoding. The prefill time is measured by the time for incremental prefill, which is the time between the start of incremental prefill and the time when the GPU is ready for decoding. The decoding time is measured by the time between the start of decoding and the time when the GPU finishes generating all tokens.

As shown by the graph, decoding actually takes up to half of the total time given perfect KV cache hits (assuming no contention for OpenAI inference service on President’s Day XD). This is because decoding often involves generating many tokens, especially for complex tasks like software engineering, where the model needs to generate long code snippets.

The key bottleneck for decoding speed is the memory access instead of the actual computation. During decoding, the model needs to frequently load the model weights as well as KV cache. This results in a minimal delay of total_memory / HBM bandwidth for each generated token. Below is a graph on the roofline model for GPU computations. Decoding falls into the memory bound regime, meaning that the execution time is dominated by memory access rather than how fast you can do the computations like matrix multiplication.

Admittedly, speculative decoding as discussed in this blog can help improve the throughput by generating multiple tokens in one forward pass. However, the fundamental bottleneck remains: each forward pass still requires loading weights and KV cache from memory.

Groq’s LPU architecture, which utilizes SRAM for storing model weights, offers a potential solution to this bottleneck. SRAM provides significantly faster access times compared to traditional memory types like HBM or DDR by directly putting memory on chip. This allows them to have 80TB/s bandwidth for SRAM as discussed by the blog. This allows them to have much faster vanilla (without speculative decoding) speed compared to traditional GPU architectures.

What if We Combine these Two?

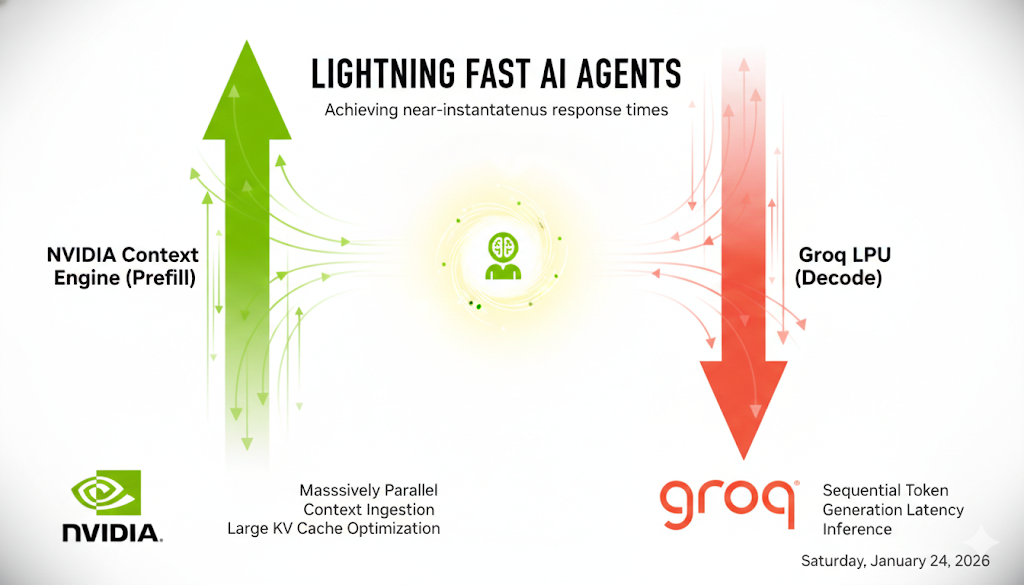

Let’s now assume we are designing the next generation agent inference platform for Nvidia. Given the previous calculations, you will suddenly realize that if we just put the two pieces together: ICMS for KV cache hits and Groq’s SRAM-based architecture for decoding speed, we can potentially achieve real-time agents. Here is a rough sketch of the proposed architecture:

Overall, the architecture we are proposing two components:

- Prefill node, which uses the compute optimized GPUs as compute units for incremental prefill and connects to many storage devices to store KV cache for long contexts.

- Decoding node, which uses specialized hardware that opimizes for memory access to achieve fast decoding.

Below, we will do a simulation demonstrating how this architecture can potentially speed up agent inference from over minutes (now) to real-time (future).

The naive baseline assumes the prefix cache hits only half of the time, for the rest half, it has to do full prefill (we estimate by 7 seconds per turn). The decoding is done on regular GPU without speculative decoding. The proposed architecture assumes perfect KV cache hits all the time and decoding is done on improved hardware with 4x decoding speed as measured by Artificial Analysis report, we estimate prefill time reduction to be 1/3 based on the 1.6x performance improvement per chip estimate here.

The simulated results are quite promising. By enabling full KV cache hits, we reduce the waiting time to a much more waitable gap. If we can further push the decoding speed with new architectures and keep up the current FLOPS improvements, the total inference time can be reduced from over minutes to under 30 seconds. This could greatly enhance the user experience to make sure users stay in the flow state while keeping majority of the computation with relatively cheap hardware (GPUs) instead of using more expensive ones like Cerebras entirely.

Challenges for Realizing the Proposed Architecture

We outline some of the immediate challenges for realizing the proposed architecture:

The integration between Nvidia’s GPU platform and Groq’s LPU architecture. This includes architecture design, data transfer protocols, and other compatibility issues. Author does not work on hardware design and thus cannot comment too much on the specific hardware. But it remains unclear how we can expose software APIs to allow seamless data transfer between the two hardwares.

The software stack for coordinating prefill and decoding nodes. Although the idea of ICMS is promising, software stack for efficiently coordinating between prefill and decoding nodes is non-trivial. This includes design of different caching policies, scheduling systems for balancing delay and throughput under agentic scenarios, and other system-level optimizations. There have been initial effort on software stack for LLM inference such as vLLM, SGLang, LMCache… But customizing them for these specialized hardware workloads will remain challenging. Moreover, utilizing speculative decoding in the new architecture will also require significant software engineering efforts.

Conclusion

There is a natural synergy between Nvidia’s two latest movements. By combining the newly released inference context management storage system for reusing KV cache and groq’s SRAM for rapid decoding, Nvidia actually can achieve significant improvements in agent inference speed. While our proposed architecture is purely theoretical, the recent trends of frontier labs like Anthropic or OpenAI deploying on Cerebras suggest that the industry is moving towards real-time agents. We believe real-time, context-aware agents will soon be no longer a trial product but massively deployed in the next few years with the growing technology.